We are starting a new blog series from today where we plan to present interesting tips and tricks that can help you in managing postgres servers. We recommend you to go through our PgPool series and RDS series if you have not done that already

1) Synchronous_standby_names and its significance

We have seen cases where people think synchronous replication is automatically enabled once they have SYNCHRONOUS_COMMIT set to ‘ON’ . Although synchronous_commit is enabled , you need to configure synchronous_standby_names parameter to use synchronous replication

Here are some of the possible options for synchronous_standby_names :

- ANY 1 (replica1, replica2, replica3, replica4) – Synchronous commit to wait for reply from any one of the four replicas specified . Acknowledgement from any one replica is good enough in this case

- synchronous_standby_names = ‘ANY 2 (*)’ – Synchronous commit to wait for reply from any 2 standby servers. Acknowledgement from any two replicas holds good here

- FIRST 1 (replica1, replica2, replica3, replica4) – Synchronous commit to wait for first successful reply

- synchronous_standby_name=‘replica1’ – Synchronous commit to wait for reply from replica1

2) “\watch” to execute any command at regular intervals

Scenario 1 – Lets say you are monitoring your prod db server and would like to check for active queries every 2 seconds (NOTE: 2 seconds is the default interval for \watch . You can change the interval using something like \watch 30 – This changes it to 30 seconds)

You can give \watch followed by the pg_stat_activity query “select query from pg_stat_activity where state=’active’ “ ;

Scenario 2 – You want to watch the replication status during a manual failover

You can give \watch followed by the pg_stat_replication query” select client_addr,replay_lag,flush_lag,write,lag from pg_stat_replication ;”

Above are two sample scenarios but there are many other scenarios where \watch can be really helpful

3) Using Explain Analyze for INSERT/UPDATE/DELETE statements

EXPLAIN ANALYZE is used to get detailed query plan in Postgres . One difference between EXPLAIN and EXPLAIN ANALYZE is that using ANALYZE will trigger execution of the query . So for SELECT queries it might be ok to use ANALYZE – Always try to run this in preprod or on replica(not actively used by app) instead of primary .But how do we do that for INSERT/UPDATE/DELETE ?

So the trick is to wrap the statement in a transaction block :

BEGIN ;

Run the explain analyze for insert/update/delete statement

ROLLBACK ;

Using above trick is beneficial to run ‘explain analyze’ on INSERT/UPDATE/DELETE statements without causing impact .

- Don’t run explain analyze for INSERT/UPDATE/DELETE without transaction block as depicted above – You could impact app by doing that

- Always use preprod environment to run explain , evaluate for indexes etc, Dont experiment in prod

- Even EXPLAIN on SELECT has to be run in preprod or a prod replica which is not actively used by app

- You can also restore the needed tables to preprod and test it there (If your query is only touching few tables which can be easily restored to preprod)

- Each situation is different – For example one INSERT might only touch ten records whereas another might touch million records . So running EXPLAIN ANALYZE for an INSERT touching millions of records is totally different than a 10 row INSERT

- Please test/measure in preprod , evaluate for index usage etc in preprod and not prod

- Thanks to Postgres Guru Nikolay for adding his inputs – Checkout https://github.com/postgres-ai/database-lab-engine if you are interested . It can be used to create Prod Clone

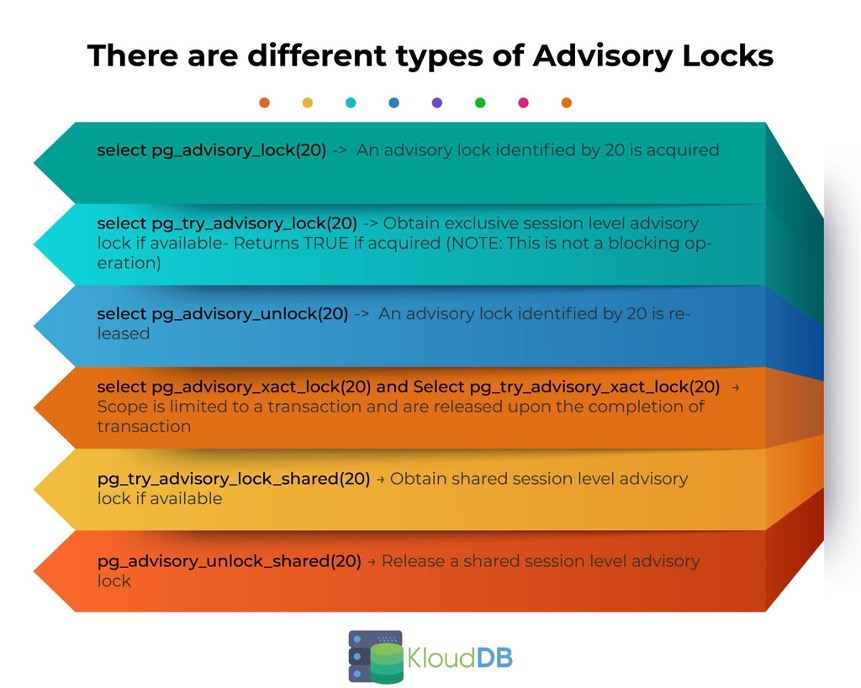

4) Advisory locks in Postgres

Advisory locks are available in Postgres and this is one of the talked about Dev features in addition to CTEs,Window functions etc.System does not enforce their usage ,they are exclusive application locks (Controlled entirely via app code)

Example Scenario – Lets say you need to write to a dynamodb table (or a logfile external to postgres) and you want to prevent two app processes writing at the same time ; you could use advisory locks in this scenario

Some of the common commands are presented here :

5) Delayed Replication

You can have a delayed replica in your cluster to recover data if a release fails , or if there is an accidental delete on a particular table Recovery_min_apply_delay is the parameter that you need to enable . Lets say you set it to ‘16h’ , replica will be 16 hours behind the primary . Having delayed replica can help you in quick recovery – Otherwise you need to do a full restore and extract the needed subset of data (This could take a long time impacting your RTO . Imagine how long it could take if your DB size is in Terabytes)