Table of Contents

What is the multi-instance feature in Pgbouncer ? How is it beneficial ?

PgBouncer supports SO_REUSEPORT socket option, which allows running multiple instances of PgBouncer on the same host(using same port).PgBouncer instances on the same host can solve the single-core bottleneck issue and sometimes improve the TPS

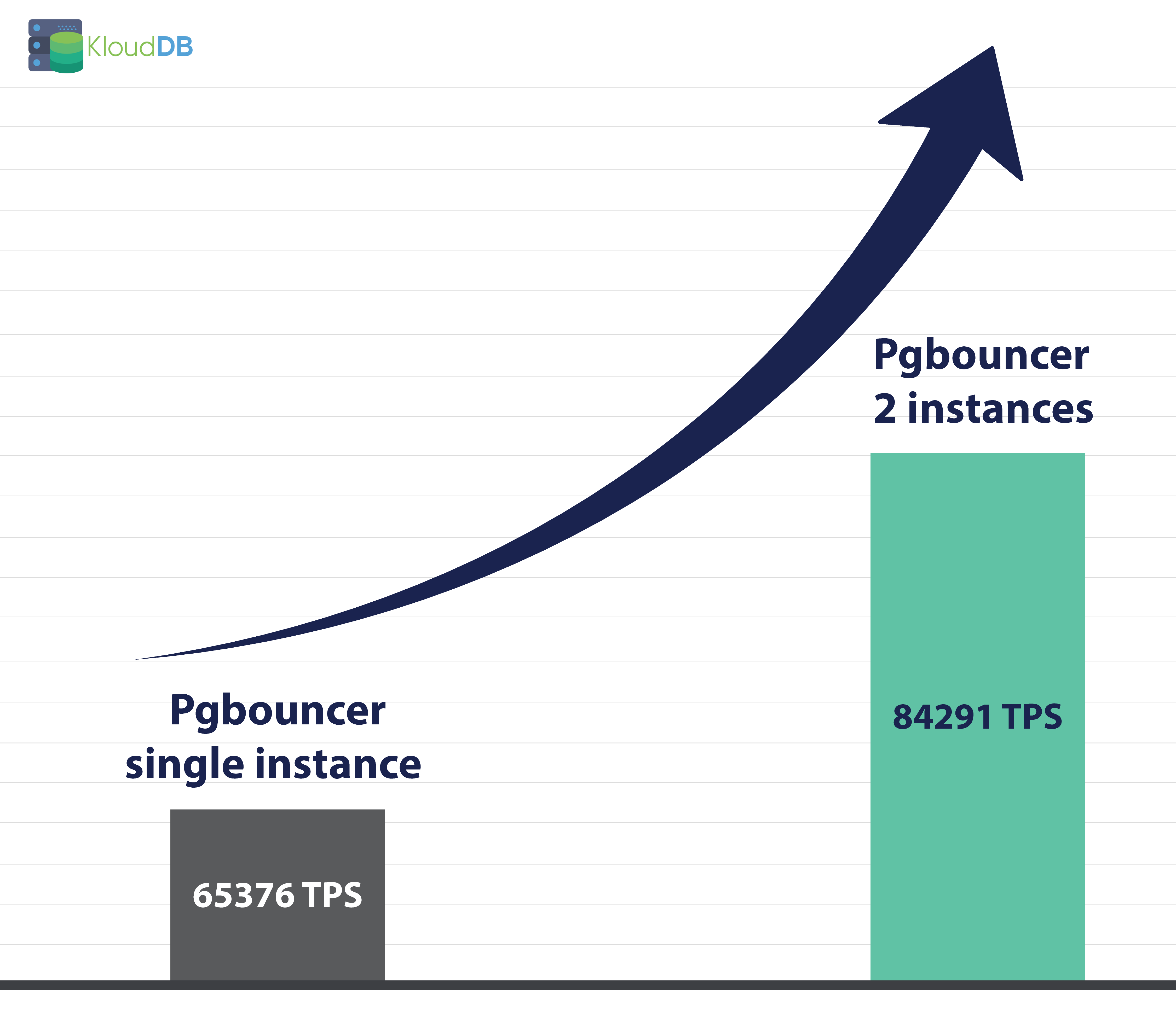

In this blog we are presenting a benchmarking scenario where TPS increased from 65000 to 84000 using multiple instances . Also we are releasing a free utility to deploy multiple pgbouncer instances

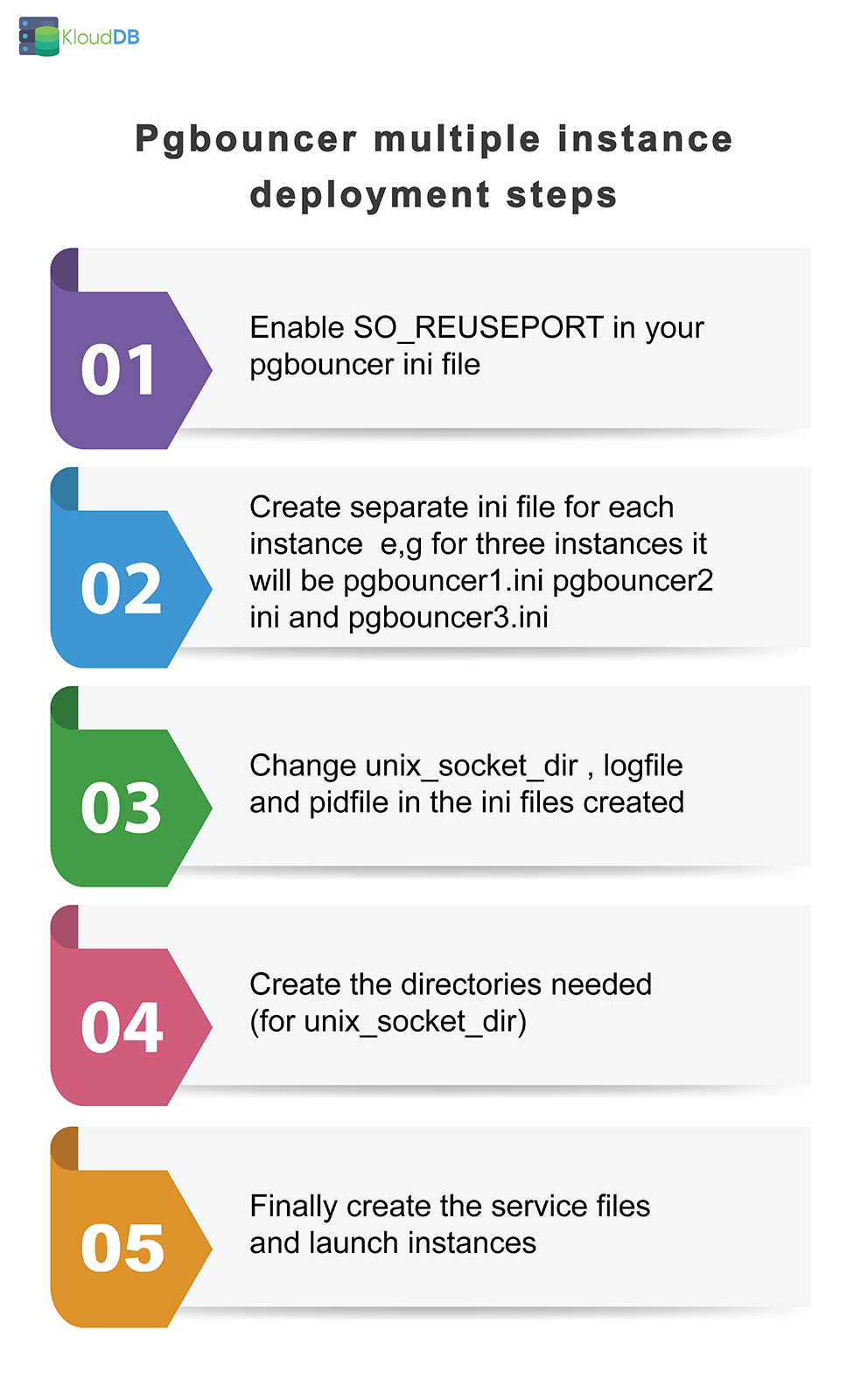

Steps involved to configure multiple pgbouncer instances

Below are the manual steps involved in configuring multiple pgbouncer instances-

2.1 Enable SO_REUSEPORT in your pgbouncer ini file

Please install pgbouncer and make all the changes according to your standards (listen_address , max_client_conn , admin_users etc..) . Also populate userlist.txt . You must enable SO_REUSEPORT to use multiple instances sharing same port

NOTE : It is better to make these changes before cloning ini file for multi instances (It reduces the effort )

2.2 Create separate ini file – If you want three instances you need to create separate ini file for each instance

For example, below are three different ini files for 3 instances( Note : Here the path is /etc/pgbouncer but it can be changed according to your standards)

NOTE : Before cloning the ini file make sure you have all the needed parameters set e.g so_reuseport , max_client_conn etc..

/etc/pgbouncer/pgbouncer1.ini /etc/pgbouncer/pgbouncer2.ini /etc/pgbouncer/pgbouncer3.ini

2.3 Change unix_socket_dir , logfile and pidfile in the three ini files created

Pgbouncer1.ini => unix_socket_dir = /var/run/postgresql/pgbouncer1_sock logfile = /var/log/postgresql/pgbouncer1.log pidfile = /var/run/postgresql/pgbouncer1.pid Pgbouncer2.ini => unix_socket_dir = /var/run/postgresql/pgbouncer2_sock logfile = /var/log/postgresql/pgbouncer2.log pidfile = /var/run/postgresql/pgbouncer2.pid Pgbouncer3.ini => unix_socket_dir = /var/run/postgresql/pgbouncer3_sock logfile = /var/log/postgresql/pgbouncer3.log pidfile = /var/run/postgresql/pgbouncer3.pid

2.4 Create the directories needed (for unix_socket_dir)

mkdir /var/run/postgresql/bouncer1_sock mkdir /var/run/postgresql/bouncer2_sock mkdir /var/run/postgresql/bouncer3_sock

2.5 Finally create the service files and launch instances

/etc/systemd/system/pgbouncer1.service /etc/systemd/system/pgbouncer2..service /etc/systemd/system/pgbouncer3.service

You can paste below code and change PIDFile, ExecStart etc.. in below file

[Unit] Description=pgBouncer Instance 1 After=postgresql.service [Service] Type=forking User=postgres Group=postgres ExecStart=/usr/sbin/pgbouncer -d /etc/pgbouncer/bouncer1.ini ExecReload=/bin/kill -SIGHUP $MAINPID PIDFile=/var/run/postgresql/bouncer1.pid

Once you prepare the service files you can start the instances

Systemctl start pgbouncer1 Systemctl start pgbouncer2 Systemctl start pgbouncer3

Shell script to automate this process

We are releasing a simple shell script that can be used to deploy multiple instances . This is basic version and we plan to enhance it based on user feedback

https://github.com/klouddb/klouddb_tools/tree/main/pgbouncermultiinst

How our TPS numbers increased from 65000 to 84000

In this scenario we used r5d.2xlarge instance for both pgbouncer and postgres instances . We benchmarked using ‘Select only’ workload

Using single pgbouncer instance

Below are the test results using one pgbouncer instance

root@ip-172-31-27-35:/etc/pgbouncer# pgbench -h xx.xx.x.x -p 6432 -U pgadminuser -T 60 -c 100 -S -n postgres Password: pgbench (14.5 (Ubuntu 14.5-1.pgdg20.04+1)) transaction type: scaling factor: 1 query mode: simple number of clients: 100 number of threads: 1 duration: 60 s number of transactions actually processed: 3921532 latency average = 1.530 ms initial connection time = 21.876 ms tps = 65376.373370 (without initial connection time)

Using two pgbouncer instances

Below are the test results using two pgbouncer instances

postgres@ip-172-31-27-35:~$ pgbench -h xx.xx.x.x -p 6432 -U pgadminuser -T 60 -c 100 -S -n postgres Password: pgbench (14.5 (Ubuntu 14.5-1.pgdg20.04+1)) transaction type: scaling factor: 1 query mode: simple number of clients: 100 number of threads: 1 duration: 60 s number of transactions actually processed: 5053292 latency average = 1.186 ms initial connection time = 52.606 ms tps = 84291.274529 (without initial connection time)

As you can see there is a big improvement in this case – 65000 Vs 84000 TPS

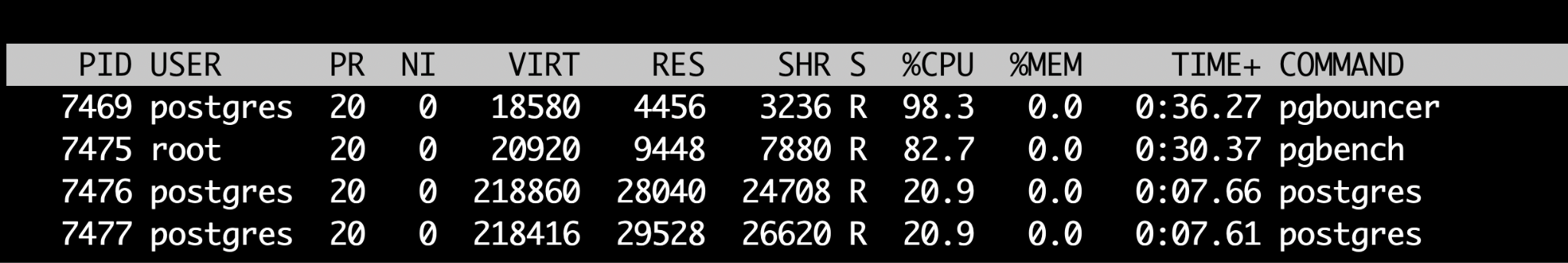

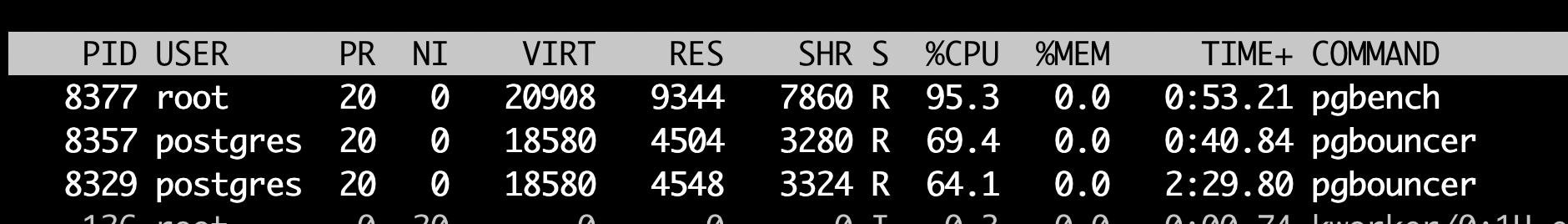

Monitor the CPU usage (with and without multiple instances)

In above tests we also captured the cpu utilization during our benchmark tests

With 1 instance you can see that cpu is hitting the limit for pgbouncer(99% usage) . You can keep monitoring the CPU usage to know if you will benefit from adding more instances

With 2 instances you can see that cpu usage is better and both of them are using around 64 and 69 %

Managing multiple pgbouncer instances might not be easy

You have seen one scenario where this feature can help you . Please note that managing multiple pgbouncer instances might not be easy . For example , If you have 10 pgbouncer instances running on the same server – How do you monitor all the 10 instances ? How do you manage the logs of all the 10 instances etc..

FAQs

It depends on your use case. Your server configuration , concurrency requirements , workload etc will influence the result . Please benchmark your use case and arrive at the right number of instances. Try not to add more instances unless you really need them.

Please keep monitoring the CPU usage (as shown above) to see how your pgbbouncer instances are being used.

This is a simple script that can help you in launching multiple instances . We tested it on Ubuntu 20 and it should work on CentOs as well . It is a basic script which needs to be enhanced to suit your requirements

Yes. Based on user feedback we plan to customize and enhance it

On some operating systems, this allows running multiple PgBouncer instances on the same host listening on the same port and having the kernel distribute the connections automatically.

Since version 1.12, PgBouncer supports the SO_REUSEPORT socket option